How To Outrank LLMs When AI Writes Half The Internet

Human content outranks AI-generated content by doing three things language models cannot: proving first-hand experience, providing original data, and building verifiable author identity. Although AI now produces roughly half of all new articles on the web, 86% of top-ranking pages on Google are still human-written. AI engines themselves cite human content 82% of the time. The advantage isn’t disappearing. But you have to know how to use it.

I’m Competing Against Machines. And So Are You.

Let me tell you how this felt.

I run a family travel blog called Nomadmum.com. I started it in 2019 after leaving my journalism career in Germany and moving to Thailand with my family. For years, I wrote honest, detailed articles about destinations, hotels, and life abroad with kids. I built real traffic, real readers, real income.

Then one day I searched for something I had written about, a topic I had literally lived, and the top results were AI-generated articles from sites whose operators had never set foot in Thailand. Generic, smooth, perfectly structured. And ranking above me.

That’s the moment I realized: the game isn’t just about beating other bloggers anymore. It’s about beating machines that can publish 50 articles in the time it takes me to write one.

But here’s the thing. Once I understood what makes human content win — and started doing those things deliberately — the results shifted back. Not because Google penalizes AI content. But because Google, ChatGPT, and Perplexity all prefer content with signals that AI simply cannot produce.

This post is everything I’ve learned about outranking LLMs as a solo blogger. The data is real. The strategies are specific. And they work.

The Numbers: AI Content Is Everywhere But It’s Not Winning

Let’s start with what the data actually says.

A Graphite study analyzing 65,000 URLs found that AI-generated articles now account for roughly 50% of all new content published online. That number went from 5% pre-ChatGPT to half in under two years. The flood is real.

But here’s the part nobody talks about: AI content isn’t winning the rankings. That same study found that 86% of top-ranking pages on Google are still human-written. Among AI assistants like ChatGPT and Perplexity, 82% of cited sources are human-authored content.

And it’s not just rankings. Consumer preference for AI-generated content has dropped to 26%, down from 60% three years ago. People can tell. They don’t trust it. They don’t engage with it the same way.

Pure AI content reaches Google’s top 10 in only 28% of cases. Making it to the top 3? Just 6%. But when humans edit AI drafts, adding experience, insights, and original data, those hybrid pieces rank 34% higher than unedited AI content.

The takeaway is clear: volume doesn’t beat value. AI can flood the internet, but it can’t flood the top results. Not yet.

Why AI Content Keeps Hitting A Ceiling

AI-generated content fails in ways that are invisible to the person who published it but obvious to search algorithms.

No experience signal. Google’s E-E-A-T framework starts with Experience. AI cannot visit a hotel. Cannot taste a dish. Cannot know that the beach path in Koh Phangan gets flooded during rainy season. Every article it produces is a synthesis of what others have already published. Search engines are getting better at detecting this, and when they do, the content gets filtered.

No original data. AI regurgitates. It doesn’t produce new findings, run experiments, or conduct interviews. Google calls this “information gain” — the unique value your page adds beyond what already exists. AI content scores near zero here because everything it writes already exists somewhere else. It’s just remixed.

No author identity. AI content has no verifiable author. No LinkedIn profile. No publication history. No one who can be held accountable for the claims. Google’s Knowledge Graph connects author identities across the web. AI content can’t participate in that system.

Pattern detection. Google’s core updates in 2024 and 2025 specifically targeted mass-produced AI text. Sites that published hundreds of unedited AI articles saw their traffic decimated. The signals Google uses — repetitive phrasing, generic structure, absence of specific details — are exactly what AI produces at scale.

I saw this with my own competitors. A few sites popped up in my niche, clearly pumping out AI-generated Thailand guides. They’d rank for a few weeks, sometimes a couple of months. Then vanish. Every time. The content was too smooth, too generic, too obviously not written by someone who lives here.

Your Unfair Advantages Over Every LLM

If you’re a real person writing about topics you actually know, you already hold cards that AI can never draw. You just have to play them.

First-hand experience. This is the big one. When I write that the pool at a specific Koh Samui resort has a shallow section perfect for toddlers, but that the restaurant doesn’t open until 7pm, which is too late for small kids, I’m giving readers something AI will never produce. Details from real life are your single biggest competitive advantage. Use them. Be specific. Name the place, the date, the weather, the problem you ran into.

Original observations. You see patterns AI doesn’t. You notice that the visa process changed last month. That a popular restaurant closed. That a new ferry route makes an island more accessible. This kind of real-time, boots-on-the-ground intelligence is what “information gain” actually means. And search engines reward it.

Your face and your name. A real author with a real bio, real credentials, real social profiles, and a real publication history builds trust that no anonymous AI article can match. Google’s entity resolution system connects your identity across platforms. When your name appears on your blog, on LinkedIn, in guest posts, on YouTube — that’s a signal AI can’t replicate.

Emotional resonance. Audiences are 2.5 times more likely to engage with content that has a personal voice and narrative depth. AI writes clean prose. Humans write things people actually want to read. Dwell time, scroll depth, return visits — these engagement signals feed directly into ranking algorithms.

As a blogger, I used to think these things were “soft” advantages. Nice to have, not essential. I was wrong. They’re now the primary reason human content outranks AI.

Entity Optimization: The Skill AI Can’t Copy

This is the most underrated tactic for outranking LLMs. And almost nobody in the blogging world is talking about it.

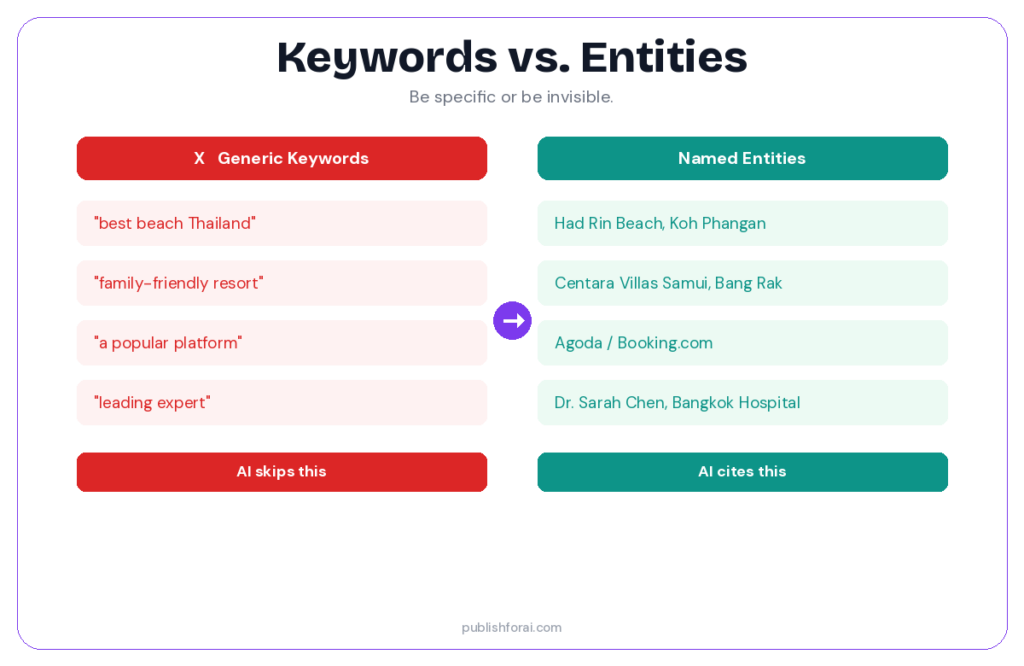

Google’s AI systems don’t read your page for keywords anymore. They check whether your content maps to entities in Google’s Knowledge Graph. An entity is a specific, verifiable thing: a person, place, brand, product, concept. If your content mentions entities clearly and connects them logically, it gets prioritized.

Research shows that pages with weak backlink profiles but clear entity signals outperform authoritative domains in AI Overviews. A page with 15 backlinks that explicitly says “Dr. Sarah Chen, pediatric nutritionist at Bangkok Hospital Samui” outranks a page with 1,500 backlinks that says “a leading health expert.”

AI content almost always fails here. It optimizes for keywords, not entities. It says “a beautiful beach in southern Thailand” when it should say “Had Rin Beach, Koh Phangan, Surat Thani province.” It says “a popular booking platform” when it should say “Agoda” or “Booking.com.”

What to do. Name everything. People, places, brands, dates, specific numbers. Connect related entities in your text. If you’re writing about a destination, mention the province, the nearest airport, the ferry company, the exact beach name including the Thai name. This is entity optimization, and it’s what separates content that gets cited from content that gets skipped.
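One way to catch generic stand-ins before you publish is a quick script that flags them. This is only a rough sketch: the adjective and noun lists are my own illustrative assumptions, seeded from the examples in this section, and you would want to extend them for your niche. A match is a prompt to name the actual entity, not a verdict.

```python
import re

# Hypothetical starter vocabulary: vague adjective + generic noun usually
# means a nameable entity (a brand, a beach, a person) is being hidden.
VAGUE_ADJECTIVES = "popular|leading|beautiful|famous|well-known|local"
GENERIC_NOUNS = "platform|expert|beach|hotel|restaurant|island|company"

VAGUE_PHRASE = re.compile(
    rf"\b(?:a|an|the) (?:{VAGUE_ADJECTIVES}) (?:[a-z]+ ){{0,2}}(?:{GENERIC_NOUNS})\b",
    re.IGNORECASE,
)


def find_vague_phrases(text: str) -> list[str]:
    """Return every phrase that looks like a placeholder for a named entity."""
    return [match.group(0) for match in VAGUE_PHRASE.finditer(text)]


draft = (
    "We stayed near a beautiful beach in southern Thailand "
    "and booked through a popular booking platform."
)
for phrase in find_vague_phrases(draft):
    print(f"Name it: {phrase}")
```

Run it over a draft and replace each hit with the real name, for example “Had Rin Beach, Koh Phangan” instead of “a beautiful beach.”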

Structure Your Content So AI Engines Pick You Over AI Content

Here’s the uncomfortable truth: AI-generated content is often better structured than human content. That’s one reason it ranks initially. It’s clean, consistent, and easy to parse.

You need to match that structural quality while bringing everything AI can’t: real information, real authorship, real experience.

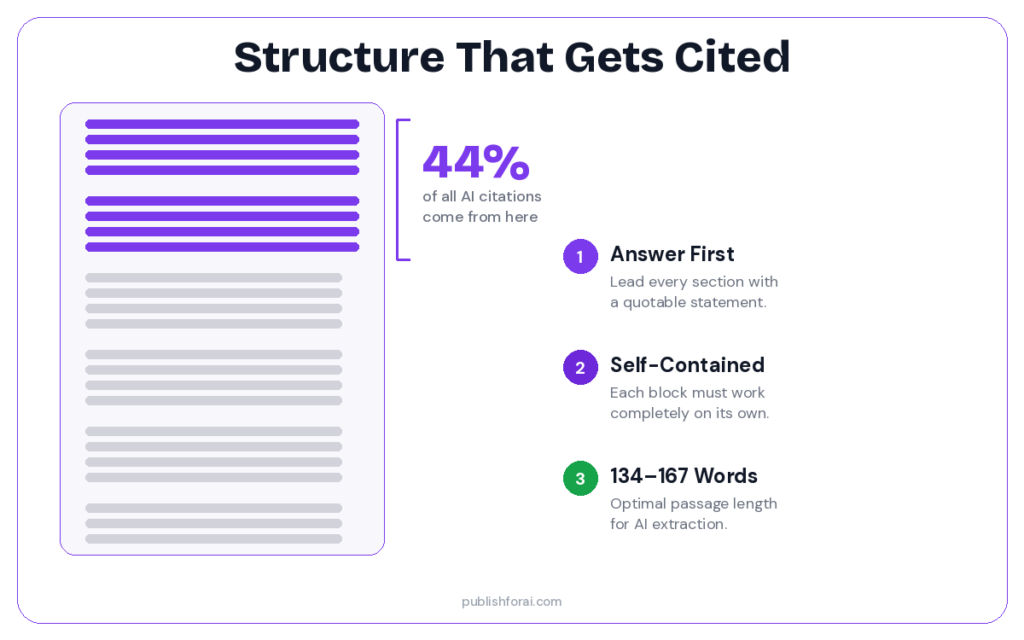

Answer first, always. Start every section with a direct, quotable statement. AI engines extract from the first sentences under each heading. 44% of all LLM citations come from the first 30% of a page. If your answer is buried in paragraph three, someone else’s intro gets quoted.

Self-contained sections. Each section should make complete sense if extracted on its own. No “as I mentioned above.” No “we discussed this earlier.” AI pulls passages, not pages. Make every passage standalone.

Semantic completeness. Research shows semantic completeness has the strongest correlation with AI citations at r=0.87. That means your content needs to fully answer the question it poses. Don’t tease. Don’t defer. Don’t say “it depends” without explaining what it depends on.

Concise passages. The optimal length for AI extraction is 134–167 words per section. Long enough to be useful. Short enough to quote cleanly. Build your article from these modular blocks.
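If you want to check your own posts against that range, a small script can do the counting. This is a minimal sketch that assumes you have already split a post into sections yourself; the function name and the heading-to-text dictionary are illustrative, and the 134 and 167 defaults simply mirror the range quoted above.

```python
def section_word_counts(
    sections: dict[str, str], low: int = 134, high: int = 167
) -> dict[str, tuple[int, str]]:
    """Map each heading to (word count, verdict) for the target range."""
    report = {}
    for heading, body in sections.items():
        count = len(body.split())  # whitespace-separated words
        if count < low:
            verdict = "short"
        elif count > high:
            verdict = "long"
        else:
            verdict = "ok"
        report[heading] = (count, verdict)
    return report


# Hypothetical sections with placeholder text, just to show the output shape.
post = {
    "Is Koh Samui good for toddlers?": "word " * 150,
    "Getting there": "word " * 40,
}
for heading, (count, verdict) in section_word_counts(post).items():
    print(f"{heading}: {count} words ({verdict})")
```

Treat the verdicts as a nudge rather than a rule. A section that fully answers its question at 120 words does not need padding.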

When I started restructuring my Nomadmum posts this way — answer-first, self-contained, entity-dense — I noticed AI engines treating them completely differently. Perplexity started citing me. Google AI Overviews started pulling from my intros. The content was the same. The structure made it visible.

Go Multimodal: The One Thing AI Sites Almost Never Do

Pages that combine text, original images, video, and structured data see dramatically higher citation rates in AI Overviews. Why? Because multimodal content is hard to fake.

An AI content farm can produce 500 articles a day. But it can’t take an original photo of the view from a hotel balcony. It can’t record a video walking through a night market. It can’t create an infographic based on first-party survey data.

This is a massive competitive moat for real publishers.

On Nomadmum, my posts that include original photos consistently outperform those that use stock images. Not by a small margin. Google can tell the difference. EXIF metadata and uniqueness checks both signal authenticity.

What to do. Add original photos to every post. Create simple infographics with your own data. Embed video if you can. Use proper alt text with entity-rich descriptions. And implement schema markup so AI engines can connect your media to your content. Pages with full multimodal integration see up to three times more AI citations than text-only pages.
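For the schema part, here is a minimal sketch of generating Article markup that ties your original photos and your author identity to a post. The property names (`headline`, `author`, `image`, `datePublished`, `dateModified`) are standard schema.org vocabulary. The function name and every example value (the example.com URLs and the author name) are placeholders I made up for illustration.

```python
import json


def build_article_jsonld(
    headline: str,
    author_name: str,
    author_url: str,
    date_published: str,
    date_modified: str,
    image_urls: list[str],
) -> dict:
    """Build a schema.org Article object linking a post to its author and images."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name, "url": author_url},
        "datePublished": date_published,  # ISO 8601 dates
        "dateModified": date_modified,
        "image": image_urls,  # your own photos, not stock images
    }


# Placeholder values only; swap in your real post details.
markup = build_article_jsonld(
    headline="Koh Samui With Toddlers",
    author_name="Jane Doe",
    author_url="https://example.com/about",
    date_published="2025-11-02",
    date_modified="2026-03-10",
    image_urls=["https://example.com/images/pool.jpg"],
)
print(json.dumps(markup, indent=2))
```

The printed JSON goes inside a `<script type="application/ld+json">` tag in the page head. Most blogging platforms have a plugin that does this for you, so the script is mainly useful for seeing what the markup should contain.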

The Freshness Advantage: AI Content Goes Stale Instantly

Here’s something most publishers miss: AI-generated content starts aging the moment it’s published. And it never gets updated.

AI content farms publish at scale but they don’t maintain at scale. They don’t go back and update the restaurant that closed, the price that changed, the regulation that shifted. That’s your opening.

Perplexity indexes the live web in real time and heavily prioritizes recency. Google’s AI Overviews also favor content with recent, verifiable data. A blog post updated last week with current prices and fresh details will outrank a perfectly structured AI article from six months ago.

I update my top Nomadmum posts every three to four months. New prices, new details, new photos. I add visible “Last updated: March 2026” dates. It’s tedious. It’s also one of the strongest signals I have against AI-generated competitors who publish and forget.

What to do. Review your highest-value pages quarterly. Update statistics, verify links, add new information. Show the update date prominently. This signals to AI engines that your content reflects current reality — something static AI articles can never claim.
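To keep the quarterly review from slipping, you can list which pages are overdue. This is a small sketch that assumes you keep your own record of when each page was last updated; the URLs, the dates, and the 90-day threshold are illustrative stand-ins for “quarterly.”

```python
from datetime import date, timedelta


def pages_due_for_review(
    last_updated: dict[str, date], today: date, max_age_days: int = 90
) -> list[str]:
    """Return URLs whose last update is older than max_age_days, sorted by URL."""
    cutoff = today - timedelta(days=max_age_days)
    return sorted(url for url, updated in last_updated.items() if updated < cutoff)


# Hypothetical update log for two posts.
update_log = {
    "/koh-samui-with-toddlers": date(2025, 11, 1),
    "/thailand-visa-guide": date(2026, 2, 1),
}
for url in pages_due_for_review(update_log, today=date(2026, 3, 15)):
    print(f"Review needed: {url}")
```

Passing `today` in explicitly keeps the check repeatable. In day-to-day use you would pass `date.today()`.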

The Technical Foundation That Makes Everything Work

None of the above matters if AI engines can’t find or verify your content. The technical basics are non-negotiable.

- Schema markup. Article, FAQPage, Person (for authors), Organization. Machine-readable proof of who you are and what your content covers. Sites with structured data see meaningfully higher citation rates. I ignored this for too long on Nomadmum, so don’t repeat my mistake.

- AI crawler access. Check your robots.txt. GPTBot, PerplexityBot, ClaudeBot, and Googlebot all need access. Block them and you’re invisible. It’s that simple.

- Author pages. Dedicated pages for every author with real credentials, external links, and publication history. This feeds Google’s entity resolution and differentiates you from anonymous AI content.

- HTTPS and site trust. Secure site, clear contact info, visible correction policy, real about page. AI engines evaluate site-level trust before citing individual pages.

- Internal linking. Connect related content into topic clusters. AI evaluates topical depth across your site, not just individual pages. A single blog post signals nothing. Thirty interconnected posts on the same topic signal expertise.

These aren’t glamorous tasks. They’re the kind of stuff I used to put off because it felt like “technical SEO for big companies.” It’s not. It’s the foundation that separates blogs AI cites from blogs AI ignores.
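The crawler-access check from the list above is easy to automate with Python’s standard library. This sketch parses the text of a robots.txt file and reports which of the crawlers named above are blocked from a given page. The sample rules and the example.com URL are made up for illustration, and real crawlers may interpret edge cases differently from Python’s parser.

```python
from urllib.robotparser import RobotFileParser

# Crawler tokens taken from the list above.
AI_CRAWLERS = ["GPTBot", "PerplexityBot", "ClaudeBot", "Googlebot"]


def blocked_crawlers(
    robots_txt: str, page_url: str, agents: list[str] = AI_CRAWLERS
) -> list[str]:
    """Return the crawlers that the given robots.txt text blocks from page_url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, page_url)]


# Hypothetical robots.txt that blocks one AI crawler and allows everyone else.
sample_rules = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""
print(blocked_crawlers(sample_rules, "https://example.com/koh-samui-guide"))
```

To test your live site, paste in the contents of your own robots.txt, or use `RobotFileParser.set_url()` and `read()` to fetch it directly.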

Bottom Line

AI writes half the internet now. That sounds terrifying. But 86% of top-ranking pages are still human. 82% of AI citations go to human content. Consumer trust in AI content is falling, not rising.

The publishers getting crushed aren’t losing to AI. They’re losing because they’re creating content that’s indistinguishable from AI. Generic. Anonymous. No original data. No visible author. No proof of experience.

The ones winning are doing the opposite. They’re leaning into everything that makes human content different. Real experience. Named entities. Original media. Visible authorship. Regular updates. Structural precision.

When I started treating my Nomadmum blog not as “content” but as “evidence that a real person lives here and knows this stuff” — that’s when things started changing. AI can’t be a mum in Koh Samui who knows which restaurant has a kids menu. I can. And Google knows the difference.

Be the source AI can’t replicate. That’s the whole strategy.

Key Takeaways

- AI generates half of new web content, but 86% of top-ranking pages are still human-written. Volume doesn’t beat value.

- Pure AI content reaches Google’s top 3 in only 6% of cases. Human-edited AI drafts rank 34% higher than unedited AI content.

- Experience is the signal AI cannot fake. First-hand details, original photos, and specific observations are your strongest competitive edge.

- Entity optimization matters more than keywords. Name specific people, places, brands, and dates. AI content fails here by default.

- Structure your content for AI extraction: answer-first, self-contained sections, 134–167 words per passage, high semantic completeness.

- Multimodal content (text + original images + video + schema) sees up to 3x higher AI citation rates than text-only pages.

- Freshness beats static. Update your top pages quarterly and show visible “Last updated” dates. AI content farms publish and forget.

- Technical basics — schema, crawler access, author pages, internal linking — are the foundation that makes everything else work.

FAQ

Does Google Penalize AI-Generated Content?

Not automatically. Google’s official position is that content quality matters more than how it was created. However, Google’s 2024 and 2025 core updates specifically targeted mass-produced, low-quality AI content. Sites that published hundreds of unedited AI articles saw traffic collapse. The line is clear: AI as a tool is fine. AI as a replacement for human expertise is not.

What Percentage Of Top-Ranking Content Is Human-Written?

According to a Graphite analysis of 65,000 URLs, 86% of top-ranking pages on Google are still human-authored. Among AI assistants like ChatGPT and Perplexity, 82% of cited sources are human-written. Despite AI producing roughly half of new web content, human content dominates the positions that drive traffic and citations.

Can Small Bloggers Outrank AI Content Farms?

Yes. AI content farms produce volume but lack experience signals, original data, and verifiable authorship. Small bloggers with genuine niche expertise, topic depth, and regular content updates can outperform generic AI content on specific topics. Google and AI engines evaluate page-level quality, not just domain size.

What Is Entity Optimization And Why Does It Matter?

Entity optimization means structuring your content around specific, verifiable things — named people, places, brands, products, and dates — rather than generic keywords. Google’s AI systems map content to its Knowledge Graph using entities. Pages with clear entity signals outrank pages with higher backlink counts in AI Overviews because the system values structural clarity over historical authority.

What Is Information Gain And How Does It Help Beat AI Content?

Information gain measures the unique value your page adds beyond what already exists on the web. Since AI-generated content is synthesized from existing sources, it scores near zero on information gain. Content with original data, first-hand observations, or new analysis provides something genuinely new — and search engines reward that novelty with higher rankings.

How Long Should Sections Be For AI Citation?

Research shows the optimal passage length for AI extraction is 134–167 words per section. Each section should be self-contained and start with a direct, quotable answer. Semantic completeness — fully answering the question within the section — has the strongest correlation with AI citations at r=0.87.

Does Multimodal Content Really Get More AI Citations?

Yes. Pages that combine text, original images, video, and structured data see significantly higher selection rates in AI Overviews. Full multimodal content with schema integration can receive up to three times more citations than text-only pages. Original media is especially valuable because it signals authenticity that AI content farms cannot replicate.

How Often Should I Update Content To Beat AI Articles?

Review high-value pages every three to four months. Update statistics, verify external links, add new information, and show visible “Last updated” dates. Perplexity especially prioritizes recency since it indexes the live web. AI content farms rarely maintain their articles, so consistent updates give human publishers a compounding advantage over time.

Is A Hybrid AI-Human Approach Better Than Pure Human Writing?

For most publishers, yes. Using AI for research, outlines, and drafts while adding human experience, original insights, and editorial judgment produces content that ranks 34% higher than unedited AI content. The key is that the human layer adds what AI cannot: first-hand knowledge, emotional depth, and verifiable authorship. Think of AI as a research assistant, not a ghostwriter.

What’s The Single Most Important Thing To Outrank LLMs?

Be undeniably human. Add details only a real person would know. Put your name and face on your work. Show proof of experience. The entire reason human content still dominates search rankings is that AI cannot replicate real-world knowledge, genuine expertise, and verified identity. Make those signals impossible to miss.